There is a conversation happening about your brand right now that you are not part of.

It is not taking place in a newsroom, on social media, or in a competitor’s boardroom. It is taking place inside the large language models that hundreds of millions of people now consult as their first port of call for information: ChatGPT, Gemini, Perplexity, and the AI Overviews embedded directly into Google Search. Every time someone asks one of these platforms about your company, your industry, or your leadership team, they receive an answer. That answer was assembled without your input, is presented without a byline, and carries the implicit authority of a system that most users instinctively trust.

The question is not whether AI is shaping your reputation. It already is. The question is whether you have any influence over what it says.

How We Got Here

To understand why this matters so much right now, it helps to understand how quickly the landscape has shifted.

Two years ago, reputation management was still fundamentally a search engine problem. The discipline was built around Google’s ten blue links — understanding which results ranked for your name, managing what appeared on page one, and pushing unfavorable content down through the weight of better assets. That model was imperfect but navigable. The rules were relatively transparent, the levers were understood, and the results were visible.

Then generative AI entered the mainstream, and the model broke.

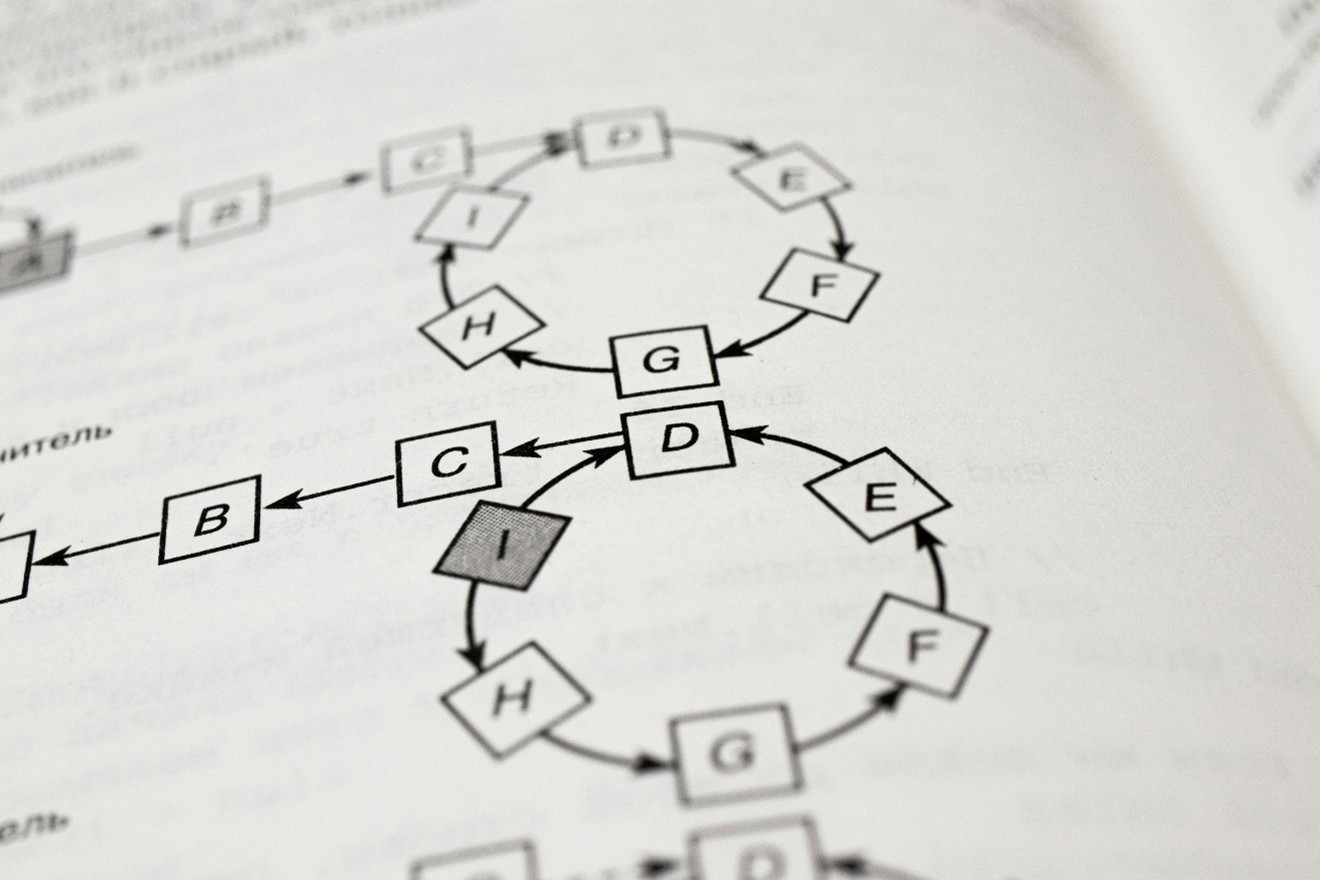

AI platforms do not return a list of links for the user to evaluate. They return a synthesized answer — a confident, fluent paragraph or series of paragraphs that draws on a vast and largely opaque body of training data and real-time retrieval. The user does not see the sources being weighed. They do not see the negative forum post from 2020 that is quietly influencing the summary. They do not see the inaccurate Wikipedia paragraph that has been baked into the model’s understanding of your company for months. They see an answer, and they tend to believe it.

This is not a flaw in the technology. It is a feature that creates an entirely new category of reputation risk — and an entirely new discipline for managing it.

The Three Ways AI Gets You Wrong

In our work with brands and executives across Europe, MENA, and Africa, we have identified three distinct ways that AI platforms misrepresent the organizations and individuals they describe. Understanding them is the first step to addressing them.

Temporal distortion. AI models are trained on data with a cutoff date, and even those with real-time retrieval capabilities weigh established sources heavily. This means that outdated information (an old controversy, a former business relationship, a leadership role you left three years ago) can persist in AI-generated summaries long after it has ceased to be relevant. The model does not know that things have changed. It knows what it was trained on.

Source imbalance. AI platforms draw from the sources that are most authoritative and most frequently cited in their training data. For most organizations, this means that a handful of high-profile sources (a major profile piece, a Wikipedia entry, a prominent industry database) carry disproportionate weight in shaping the AI’s understanding. If those sources are thin, inaccurate, or negative, the AI’s summary will reflect that. The volume and authority of positive content are not vanity metrics in this context; they are a structural defense.

Hallucination and conflation. AI models occasionally generate confident-sounding information that is simply wrong — invented facts, confused identities, conflated organizations. For individuals and companies with common names or limited digital footprints, the risk of being misrepresented in this way is significant. And unlike a factual error in a news article, an AI hallucination has no byline to challenge and no editor to contact.

What Answer Engine Optimization Actually Means

The emerging discipline designed to address this is called Answer Engine Optimization (AEO), and it represents the most significant evolution in reputation management since the rise of social media.

Where traditional SEO was concerned with ranking, AEO is concerned with citation. The goal is not to appear in a list of results but to be the source that AI platforms draw from when they construct their answers. This requires a fundamentally different approach to content and digital asset strategy.

It means producing content that is structured, authoritative, and written in a way that is easily parsed and cited by AI systems. It means ensuring that your brand is accurately and substantively represented in the knowledge databases (Wikidata, Google’s Knowledge Graph, industry-specific repositories) that serve as foundational reference points for AI models. It means building a volume of consistent, high-quality owned and earned content that collectively signals to AI systems what you stand for, what your expertise is, and how you should be described.
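One common way to make a brand description machine-readable is schema.org markup expressed as JSON-LD. The sketch below is illustrative only: the helper name `organization_jsonld`, the company, and every URL are placeholders we have invented for the example, and nothing here guarantees that a given AI platform will read or cite the markup.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization description as a JSON-LD string.

    Hypothetical helper for illustration; the field choice is an assumption,
    not a complete or required set of properties.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs points to other pages that describe the same entity,
        # for example a knowledge-base entry or an official profile.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Every value below is a placeholder, not a real company or identifier.
markup = organization_jsonld(
    name="Example Holdings",
    url="https://www.example.com",
    description="Example Holdings is a hypothetical logistics firm.",
    same_as=[
        "https://www.wikidata.org/wiki/Q_PLACEHOLDER",  # replace with the real entity URL
        "https://www.example.com/about",
    ],
)
print(markup)
```

The resulting JSON is typically embedded in a page inside a `<script type="application/ld+json">` element, so that the same facts appear consistently wherever the brand is described.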

It also means monitoring. Not just Google Alerts and media monitoring, though those remain important, but active, regular querying of the major AI platforms to understand what they currently say about your brand, and tracking how that changes over time as models are updated and retrained.
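As a rough illustration of what tracking change over time can look like, the sketch below compares two dated answers to the same question and lists the sentences that were added or removed. It assumes the answers have already been collected by whatever means you use; no platform API is called. The `Snapshot` class, the `drift` function, and the sample answers are all hypothetical.

```python
import difflib
import re
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Snapshot:
    """One recorded answer from one platform on one day (hypothetical structure)."""
    taken_on: date
    platform: str
    prompt: str
    answer: str


def split_sentences(text):
    # Naive split on sentence-ending punctuation; adequate for a sketch only.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def drift(old, new):
    """Return (similarity, changes) between two snapshots of the same prompt.

    similarity is a 0..1 ratio over whole sentences; changes lists removed
    sentences (prefixed "- ") and added sentences (prefixed "+ ").
    """
    old_s = split_sentences(old.answer)
    new_s = split_sentences(new.answer)
    similarity = difflib.SequenceMatcher(None, old_s, new_s).ratio()
    changes = [
        line for line in difflib.ndiff(old_s, new_s)
        if line.startswith(("+ ", "- "))
    ]
    return similarity, changes


# Invented sample data: the same question asked three months apart.
question = "Who runs Example Holdings?"
before = Snapshot(date(2024, 1, 15), "some-assistant", question,
                  "Example Holdings is a logistics firm. Its chief executive is Jane Doe.")
after = Snapshot(date(2024, 4, 15), "some-assistant", question,
                 "Example Holdings is a logistics firm. Its chief executive is John Roe.")

similarity, changes = drift(before, after)
print(f"similarity: {similarity:.2f}")
for line in changes:
    print(line)
```

Because AI answers vary from one query to the next, a single changed sentence is not proof that a model has been updated. Asking the same question several times per period and comparing the recurring statements gives a steadier picture.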

The Window That Is Open Right Now

Here is what most organizations do not yet appreciate: we are in an early period of AI reputation management where the competitive advantage available to early movers is unusually large.

Most brands have not yet begun to think systematically about how they are represented in AI outputs. Most have not audited what ChatGPT or Gemini says about them. Most have not taken steps to optimize their content and knowledge infrastructure for AI citation. This means that the organizations that move now — that build their AEO strategy before it becomes standard practice — will establish a presence in AI outputs that will be significantly harder for competitors to displace later.

In five years, AEO will be table stakes. The firms that treat it as a priority today will have compounded advantages that latecomers cannot easily replicate.

The Uncomfortable Question

We ask every new client the same question at the start of an engagement: What does ChatGPT say about you?

Most do not know. Some are pleasantly surprised when they check. Many are not. Almost all of them find something — an inaccuracy, an outdated reference, a gap where there should be substance — that they had no idea existed.

The algorithm has an opinion about you. It is sharing that opinion with your prospects, your investors, your potential hires, and your regulators, often before any human interaction takes place. In a world where first impressions are increasingly formed by machines rather than people, the organizations that understand and actively shape those impressions will have a profound and durable advantage over those that do not.

The only question is which side of that divide you want to be on.